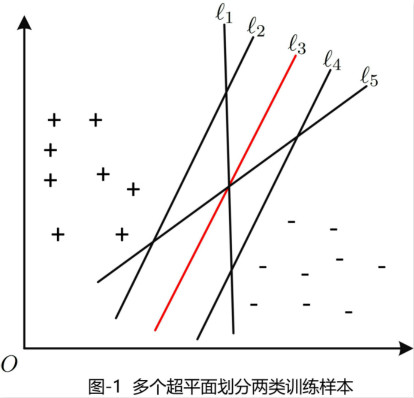

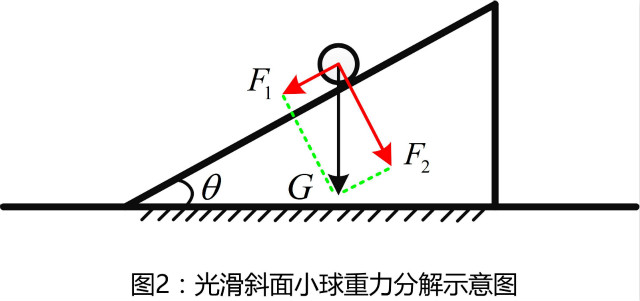

支持向量机定义的引出给定训练样本集$D=\left\{ {(\boldsymbol{x}_1,y_1),\cdots,(\boldsymbol{x}_m,y_m)}\right\},y_i\in \left\{ {-1,+1}\right\}$。分类最基本的出发点是找到一个超平面来区分训练样本集中的不同类别。事实上,可能存在很多这样的超平面。我们需要制定衡量标准(如:欧式距离)来确定最合适的超平面。

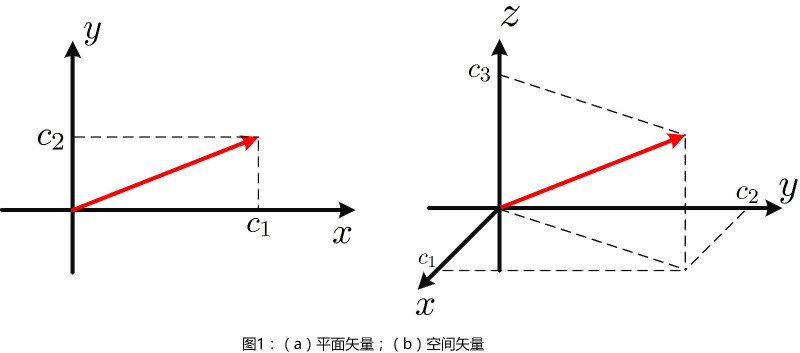

如图1所示的二维平面,直观上看,红色直线那个相对于其他更为适合,因为该直线对训练样本局部扰动的“容忍性”最好。现在,从数学角度来确定该超平面。

在样本空间中,超平面通过如下线性方程组来描述

\begin{align}

\boldsymbol{w}^T\boldsymbol{x}+b=0

\end{align}

其中$\boldsymbol{w}=\left\{ {w_1,\cdots,w_d}\right\}^T$为超平面法向量,决定超平面的方向;$b$为位移项,决定了超平面与原点之间的距离。样本空间中任意点$\boldsymbol{x}$到超平面$\boldsymbol{w}^T\boldsymbol{x}+b=0$的距离为

\begin{align}

r=\frac{|\boldsymbol{w}^T\boldsymbol{x}+b|}{||\boldsymbol{w}||}

\end{align}

空间任意点到超平面的距离,可以参考点到直线距离,类比得到。

假设超平面$(\boldsymbol{w},b)$能够将样本正确分类,即对于任意的$(\boldsymbol{x},y_i)\in D$,若$y_i=+1$,则有$\boldsymbol{w}^T\boldsymbol{x}_i+b>0$;若$y_i=-1$,则有$\boldsymbol{w}^T\boldsymbol{x}_i+b<0$。给定超平面,定义如下两个平面

\begin{align}

\left\{

\begin{matrix}

\boldsymbol{w}^T\boldsymbol{x}_i+b\geq +1,\ y_i=+1\\

\boldsymbol{w}^T\boldsymbol{x}_i+b\leq -1,\ y_i=+1

\end{matrix}

\right.

\end{align}

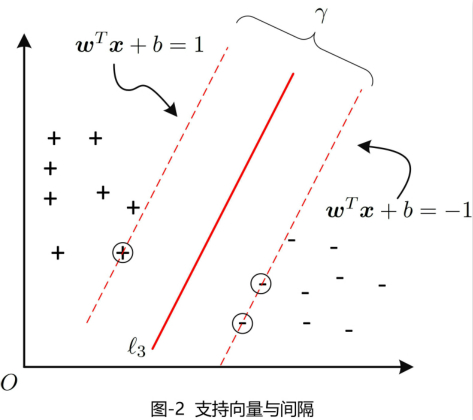

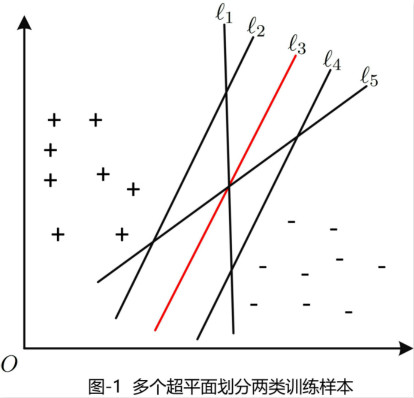

样本中,距离超平面距离最小的两个或者(在一类样本点中可能存在到超平面距离相同的点)训练样本点,我们称其为支持向量(support vector),两异类支持向量到超平面的距离之和,即两平面之间的距离,称之为间隔(margin)。间隔的代数表达为$\gamma=\frac{2}{||\boldsymbol{w}||}$,如图2所示。

为了增加超平面的鲁棒性,因此需要找到最大间隔的超平面,即

\begin{align}

\max_{(\boldsymbol{w},b)} \ &\frac{1}{||\boldsymbol{w}||}\\

\text{s.t.} \ &y_i(\boldsymbol{w}^T\boldsymbol{x}_i+b)\geq 1 \quad (i=1,\cdots,m)

\end{align}

该问题等价于

\begin{align}

\min_{(\boldsymbol{w},b)} \ & \frac{1}{2}||\boldsymbol{w}||^2\\

\text{s.t.} \ &y_i(\boldsymbol{w}^T\boldsymbol{x}_i+b)\geq 1 \quad (i=1,\cdots,m)

\end{align}

这就是支持向量机(support vector machine, SVM)的基本型。

对偶(Dual)

问题的引出

我们希望找到最大化间隔的超平面

\begin{align}

f(\boldsymbol{x})=\boldsymbol{w}^T\boldsymbol{x}+b

\end{align}

这里我们假设训练样本是线性可分的(上一节中,我们假设样本是两类的)。我们注意到,求解参数$(\boldsymbol{w},b)$本身就是一个凸优化问题,可以通过凸优化工具箱进行计算。另外,我们还可以通过解该问题的对偶问题,来得到最优解。这样所带来的好处是,将一种最优化(最小化)问题转化为另一种最优化(最大化)问题,而后者相对于前者更容易计算。

对偶问题

对于求解标准的优化问题

\begin{align}

\min \ &f_0(\boldsymbol{x})\\

\text{s.t.} \ &f_i(\boldsymbol{x})\leq 0, i=1\cdots,m\\

&h_j(\boldsymbol{x})=0, j=1,\cdots,p

\end{align}

其中$\boldsymbol{x}\in \mathbb{R}^n$。我们假设条件$f_i(\boldsymbol{x})=0$与$h_j(\boldsymbol{x})=0$所构成的定义域集合$\mathcal{D}$是非空的。并且假设$p^{\star}$是最优值。

拉个朗日对偶的基本思想,是将该优化问题增广成目标函数$L(\boldsymbol{x},\boldsymbol{\lambda},\boldsymbol{v})$,其中$L$表示映射$L:\mathbb{R}^n\times \mathbb{R}^m\times \mathbb{R}^p\rightarrow \mathbb{R}$。

\begin{align}

L(\boldsymbol{x},\boldsymbol{\lambda},\boldsymbol{v})=f_0(\boldsymbol{x})+\sum\limits_{i=1}^m\lambda_if_i(\boldsymbol{x})+\sum\limits_{i=1}^pv_ih_i(\boldsymbol{x})

\end{align}

定义拉格朗日对偶函数$g:\mathbb{R}^m\times \mathbb{R}^p\rightarrow \mathbb{R}$表示目标函数$L(\boldsymbol{x},\boldsymbol{\lambda},\boldsymbol{v})$关于$\boldsymbol{x}$的下确界

\begin{align}

g(\boldsymbol{\lambda},\boldsymbol{v})=\inf_{\boldsymbol{x}\in \mathcal{D} } L(\boldsymbol{x},\boldsymbol{\lambda},\boldsymbol{v})

\end{align}

此时,通过计算可知$g(\boldsymbol{\lambda},\boldsymbol{v})$是最优值$p^{\star}$的下界

\begin{align}

g(\boldsymbol{\lambda},\boldsymbol{v})\leq p^{\star}

\end{align}

这个结论很容易得到,假设$\tilde{\boldsymbol{x} }\in \mathcal{D}$,对于$\boldsymbol{\lambda}>0$,有

\begin{align}

\sum\limits_{i=1}^m\lambda_if_i(\tilde{\boldsymbol{x} })+\sum\limits_{i=1}^pv_ih_i(\tilde{\boldsymbol{x} })\leq 0

\end{align}

因此,我们可以得到

\begin{align}

L(\tilde{\boldsymbol{x} },\boldsymbol{\lambda},\boldsymbol{v})=f_0(\tilde{\boldsymbol{x} })+\sum\limits_{i=1}^m\lambda_if_i(\tilde{\boldsymbol{x} })+\sum\limits_{i=1}^pv_ih_i(\tilde{\boldsymbol{x} })\leq f_0(\tilde{\boldsymbol{x} })

\end{align}

即

\begin{align}

g(\boldsymbol{\lambda},\boldsymbol{v})=\inf_{\boldsymbol{x}\in \mathcal{D} } L(\boldsymbol{x},\boldsymbol{\lambda},\boldsymbol{v})\leq L(\tilde{\boldsymbol{x} },\boldsymbol{\lambda},\boldsymbol{v})\leq f_0(\tilde{\boldsymbol{x} })

\end{align}

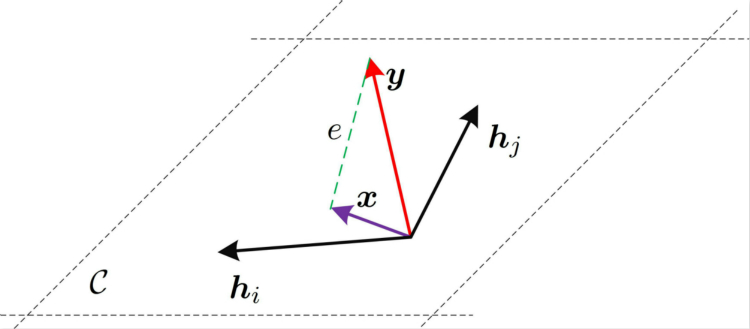

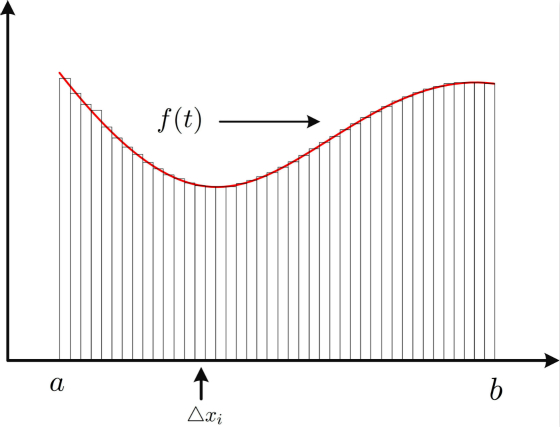

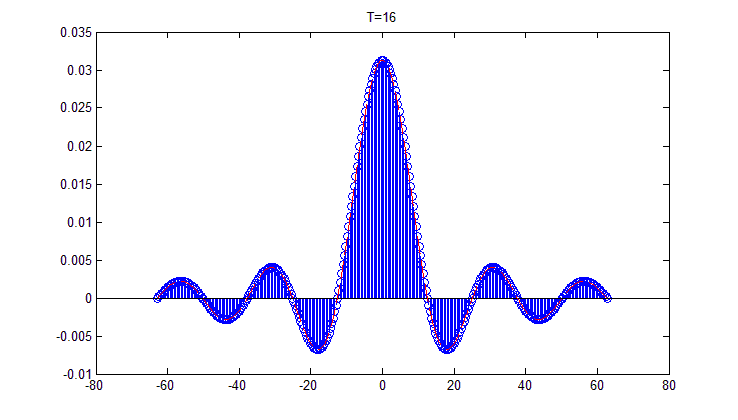

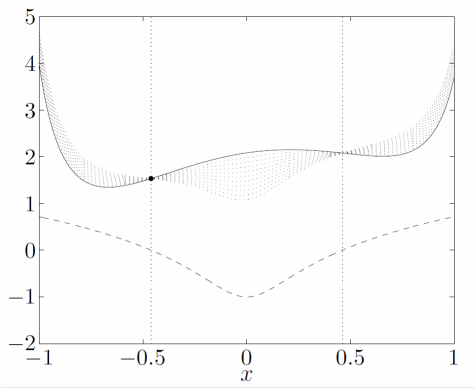

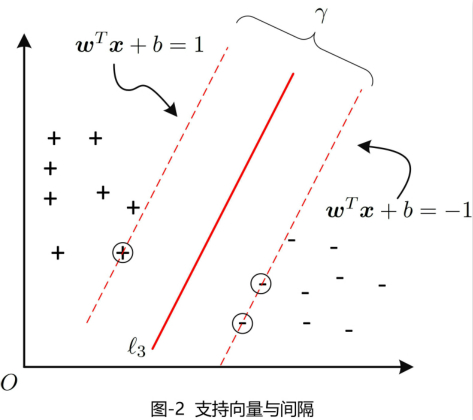

我们通过图3来进行理解。图中,实曲线表示$f_0(\boldsymbol{x})$曲线,虚曲线表示$f_1(\boldsymbol{x})$,由于限制条件$f_1(\boldsymbol{x})\leq 0$,因此$\boldsymbol{x}$的区间为$[-0.46,+0.46]$。显然该定义区间上的最优解为$\boldsymbol{x}^{\star}=-0.46,p^{\star}=1.54$。图中,带点的虚线族表达$L(\lambda,v)$,$\lambda=0.1,0.2,\cdots,1.0$。我们可以看到,$L(\lambda,v)$相对于$p^{\star}$更小。我们需要调整$\lambda$找到使得$L(\lambda,v)$最大的$p^{\star}$的下确界。

超平面的对偶问题

利用拉格朗日乘子法,得到目标函数

\begin{align}

L(\boldsymbol{w},b,\boldsymbol{\alpha})=\frac{1}{2}||\boldsymbol{w}||^2+\sum\limits_{i=1}^m\alpha_i(1-y_i(\boldsymbol{w}^T\boldsymbol{x}_i+b))

\end{align}

其中$\alpha_i\geq 0$。令$L(\boldsymbol{w},b,\boldsymbol{\alpha})$对$\boldsymbol{w}$和$b$的偏导数为$0$可得

\begin{align}

\boldsymbol{w}&=\sum\limits_{i=1}^m \alpha_iy_i\boldsymbol{x}_i\\

0&=\sum\limits_{i=1}^m\alpha_iy_i

\end{align}

代入$L(\boldsymbol{w},b,\boldsymbol{\alpha})$中,再考虑约束条件,有

\begin{align}

\max_{\boldsymbol{\alpha} }\ &\sum\limits_{i=1}^m\alpha_i-\frac{1}{2}\sum\limits_{i=1}^m\sum\limits_{j=1}^m \alpha_i\alpha_jy_iy_j\boldsymbol{x}_i^T\boldsymbol{x}_j\\

\text{s.t.}\ & \sum\limits_{i=1}^m\alpha_iy_i=0\\

&\alpha_i\geq 0, \ i=1,\cdots,m

\end{align}

解出$\boldsymbol{\alpha}$之后,即可得到模型

\begin{align}

f(\boldsymbol{x})

&=\boldsymbol{w}^T\boldsymbol{x}+b\\

&=\sum\limits_{i=1}^m\alpha_iy_i\boldsymbol{x}_i^T\boldsymbol{x}+b

\end{align}

由于该过程为拉格朗日对偶得出的解,因此,所得的解,还需满足KKT条件(found in boyd’s convex optimization)。

\begin{align}

\left\{

\begin{matrix}

\alpha_i\geq 0\\

y_if(\boldsymbol{x}_i)-1\geq 0\\

\alpha_i(y_if(\boldsymbol{x}_i)-1)=0

\end{matrix}

\right.

\end{align}

具体求解$\boldsymbol{\alpha}$的过程,是一个二次规划问题,具体方法如SMO(sequential minimal optimization)。SMO方法,每次更新$\boldsymbol{\alpha}$向量中的两个元素(如,$\alpha_i$和$\alpha_j$),固定其余参数。利用$\boldsymbol{\alpha}^T\boldsymbol{y}=0$得到$\alpha_i$和$\alpha_j$的更新。

核函数

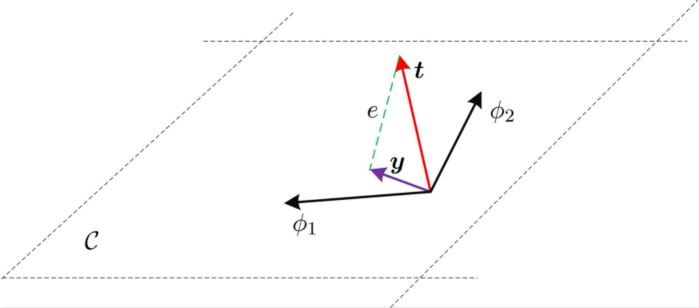

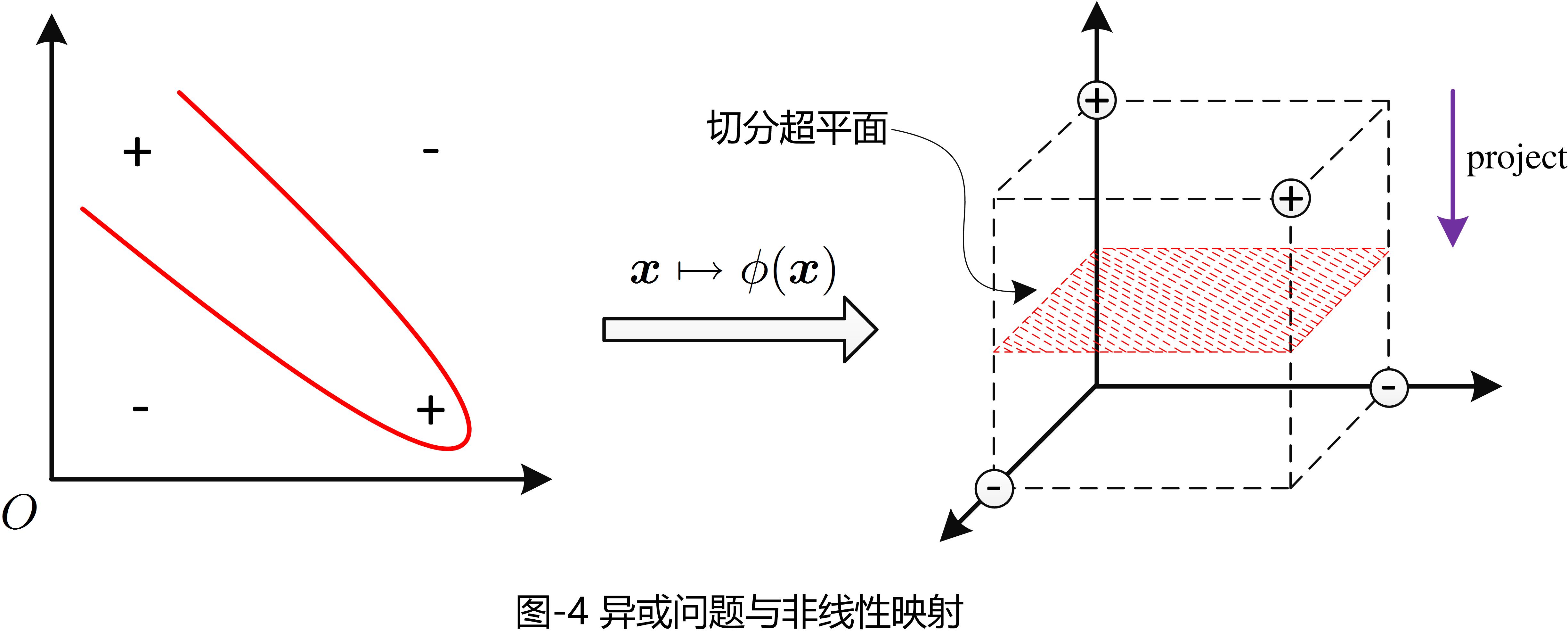

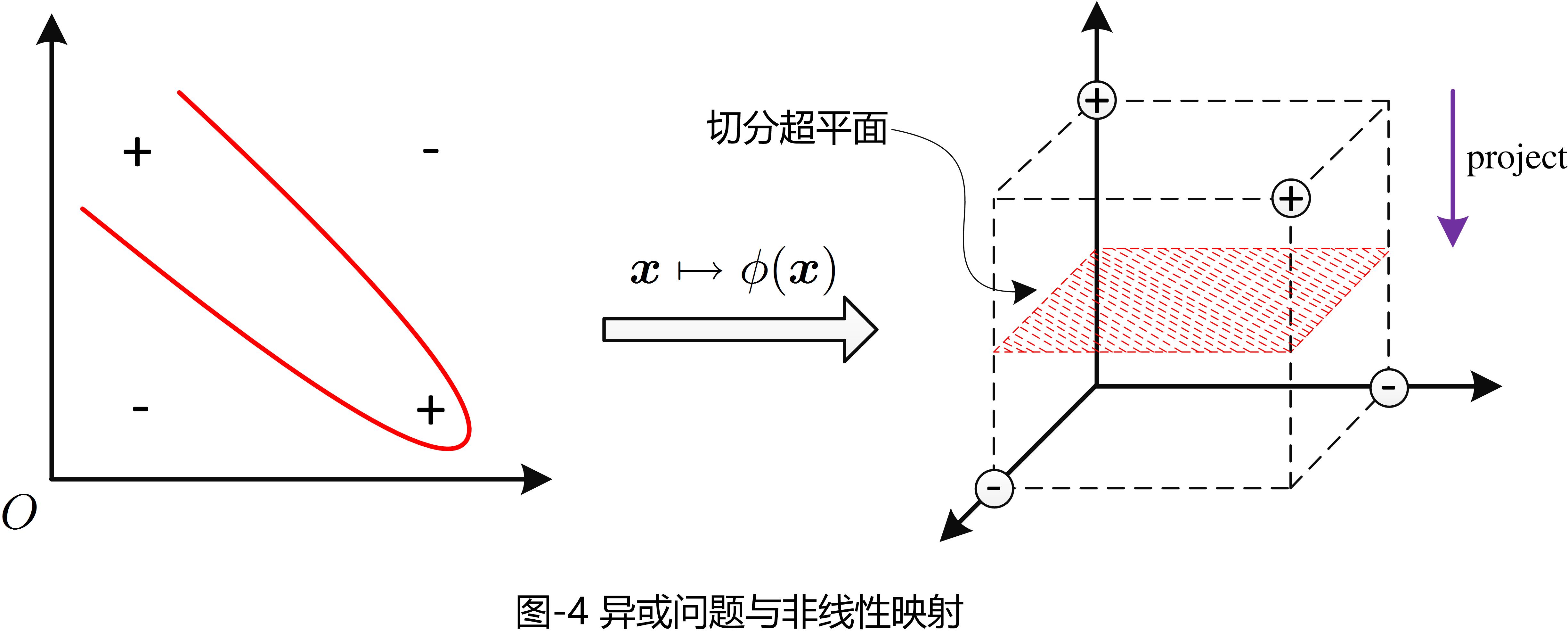

在之前的内容中,我们假设训练样本是线性可分的。然而实际中,在原始样本空间,可能并不存在这样一个能够完全正确划分两类样本的超平面,比如图4所示“异或”问题,通过将二维平面映射到三维空间,我们可以能够切分样本点的超平面。其中,三维平面按照紫色箭头的方向投影,可以得到原始的异或点。

对于这样的问题,通过将样本原始空间映射到高维空间,使得样本在高维空间中线性可分。如果样本空间是有限维的,那么一定存在一个高维空间使得样本可分。我们用$\phi:\mathbb{R}^n\rightarrow \mathbb{R}^p\ (p\gg n)$来表示这样映射,则映射之后的样本表示为$\phi(\boldsymbol{x})$。于是,我们设超平面方程为

\begin{align}

f(\boldsymbol{x})=\boldsymbol{w}^T\phi(\boldsymbol{x})+b

\end{align}

其中$(\boldsymbol{w},b)$是模型参数,类似于上一节知识,参数$(\boldsymbol{w},b)$是如下凸优化问题

\begin{align}

\min\limits_{(\boldsymbol{w},b)}\ &\frac{1}{2}||\boldsymbol{w}||^2\\

\text{s.t.} \ & y_i(\boldsymbol{w}^T\phi(\boldsymbol{x}_i)+b\geq 1)\ i=1,\cdots,m

\end{align}

其对偶问题,为

\begin{align}

\max_{\boldsymbol{\alpha} } \ &\sum\limits_{i=1}^m\alpha_i-\frac{1}{2}\sum\limits_{i=1}\sum\limits_{j=1}\alpha_i\alpha_jy_iy_j\phi(\boldsymbol{x}_i)^T\phi(\boldsymbol{x}_j)\\

\text{s.t.}\ &\sum\limits_{i=1}^m\alpha_iy_i=0\\

&\alpha_i\geq0, \ i=1,\cdots,m

\end{align}

其中$\phi(\boldsymbol{x}_i)^T\phi(\boldsymbol{x}_j)$的计算是非常复杂的,因此我们定义

\begin{align}

\kappa(\boldsymbol{x}_i\boldsymbol{x}_j)=\phi(\boldsymbol{x}_i)^T\phi(\boldsymbol{x}_j)

\end{align}

这里我们称$\kappa(\boldsymbol{x}_i\boldsymbol{x}_j)$为“核函数”。类似于上一节的知识,我们可以得到

\begin{align}

f(\boldsymbol{x})&=\boldsymbol{w}^T\phi(\boldsymbol{x})+b\\

&=\sum_{i=1}^m\alpha_iy_i\kappa(\boldsymbol{x},\boldsymbol{x}_i)+b

\end{align}

该式子表明,模型的最优解可以通过训练样本的核函数展开,这一展开式称为“支持向量展式”(support vecot expansion)

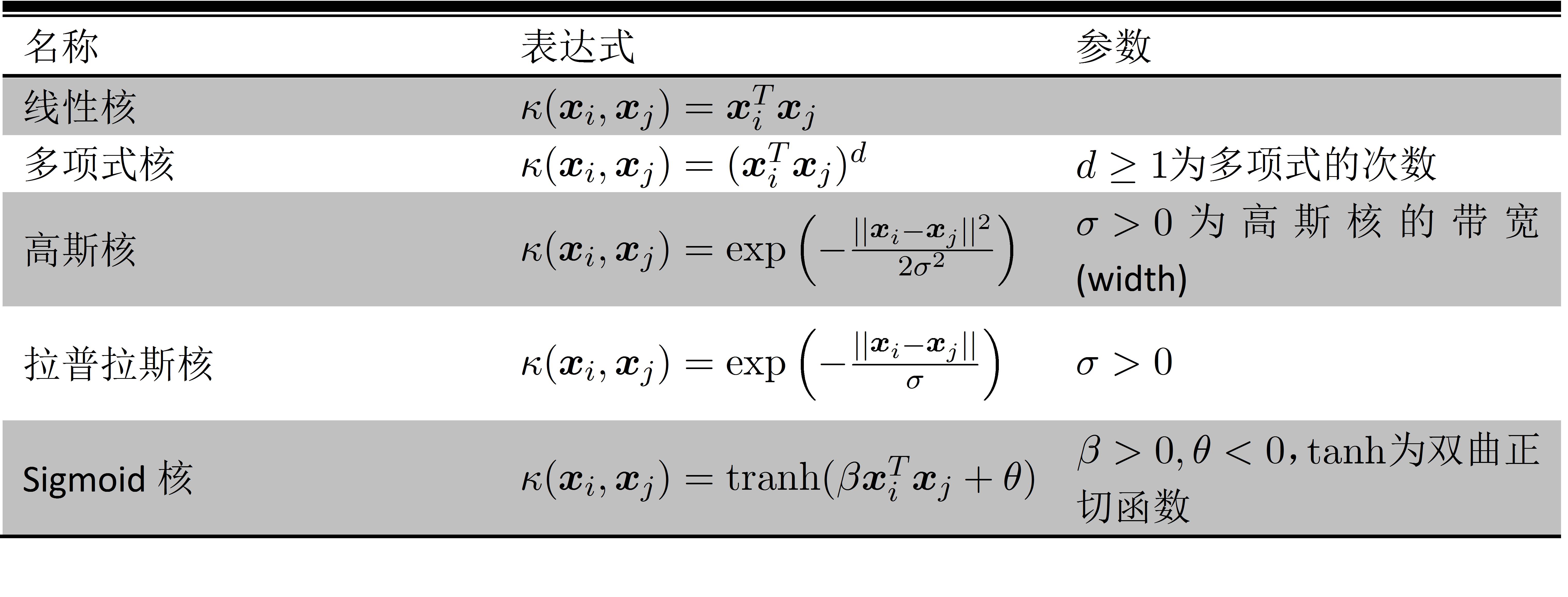

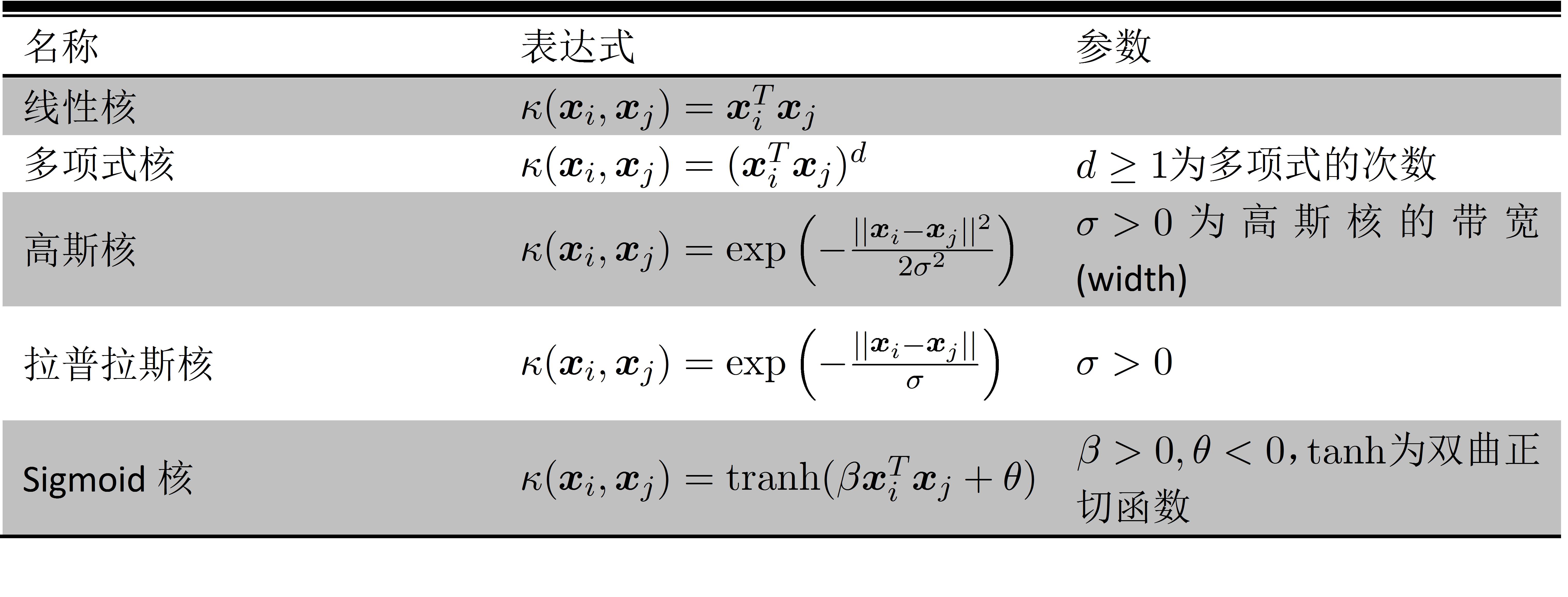

那如何确定核函数或者选择合适的核函数?需要注意的是,在不知道特征映射的形式时,我们并不知道什么样的核函数是合适的,而核函数也仅是隐式地定义了这个特征空间。因此,核函数的选择成为支持向量机最大的变数。常见的核函数有以下几种

在定义核函数的基础上,人们发展了一系列基于核函数的学习方法,统称为“核方法”(kernel methods)。最常见的,是通过“核化”(即引入核函数)来将线性学习器扩展为非线性学习器,如核线性判别分析(kernelized linear discriminant analysis, KlDA)。

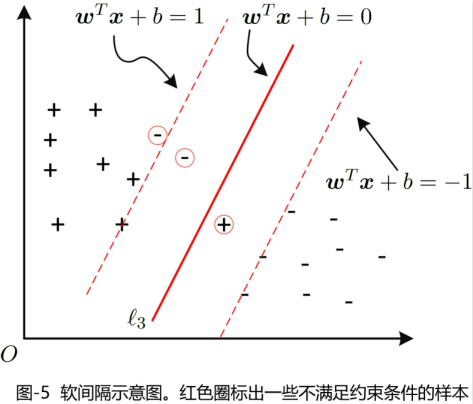

软间隔

在前面的讨论中,我们假设样本是线性可分的。然而,在现实任务中往往很难确定合适的核函数将训练样本再特征空间中线性可分退一步讲,即使恰好找到某个核函数使训练集在特征空间中线性可分,也很难断定这个貌似线性可分的结果,会不会造成过拟合(即,训练集效果好,测试集效果差)。

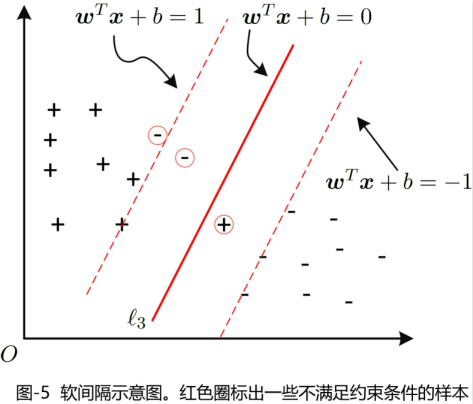

我们通过放松样本线性可分的条件,允许一些支持向量在少数的样本上出错。为此,引入软间隔的概念。如图5所示,这里不做赘述。

支持向量回归

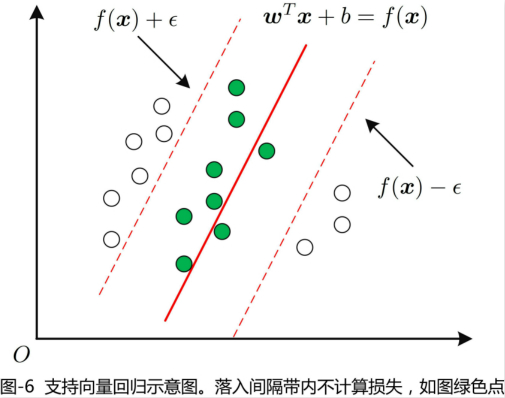

支持向量机所完成的工作是样本分类问题,即,将样本分成两个类,或者三个类。其目的是寻找一个超平面使得样本到超平面的间隔最大。而,支持向量回归,则是通过函数$f(\boldsymbol{x})=\boldsymbol{w}^T\boldsymbol{x}+b$来对样本进行拟合,即我们希望$f(\boldsymbol{x})$与$y$尽可能的靠近。

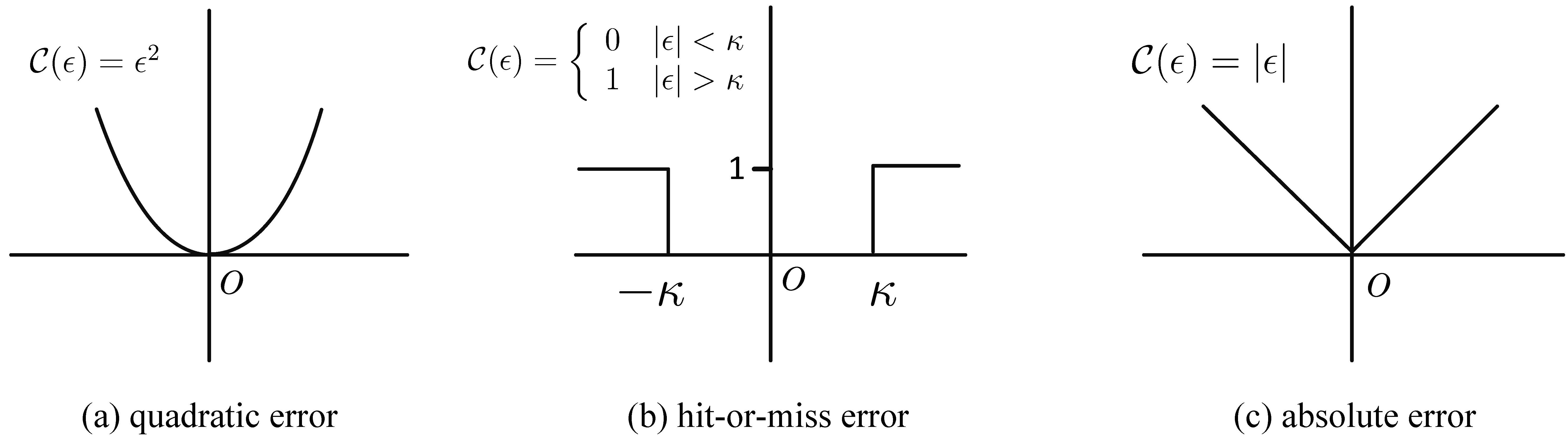

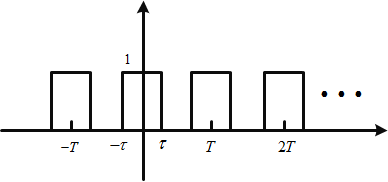

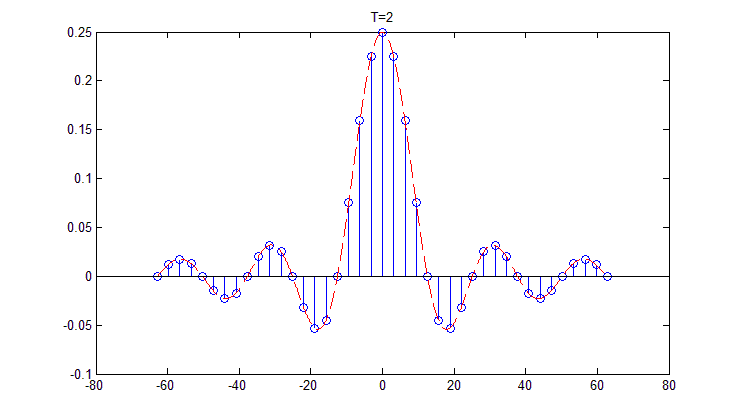

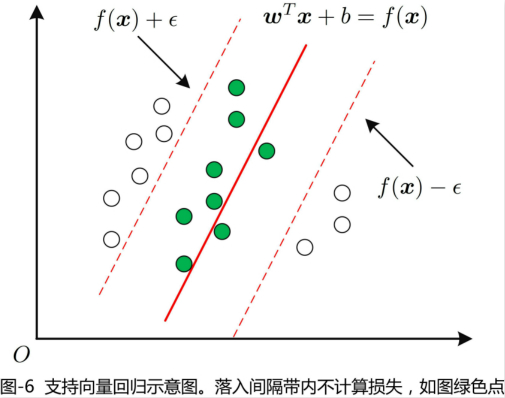

在回归问题中,我们通常采用模型输出$f(\boldsymbol{x})$和真实输出$y$之间的欧式距离来衡量回归的好坏。当且仅当$f(\boldsymbol{x})$与$y$完全相同,损失才为零。而,支持向量回归(support vector regression, SVR)放松了这个条件,SVR允许$f(\boldsymbol{x})$与$y$之间存在最多为$\epsilon$的误差,这就相当于以$f(\boldsymbol{x})$为中心,构建了如图6的一个宽度为$2\epsilon$的间隔带,若样本落入此间隔带中,则SVR认为是被预测正确的。

于是SVR问题形式化为

\begin{align}

\min_{\boldsymbol{w},b} \ &\frac{1}{2}||\boldsymbol{w}||^2+C\sum\limits_{i=1}^m\ell_{\epsilon} (f(\boldsymbol{x}_i)-y_i) \\

\text{s.t.}\ &f(\boldsymbol{x}_i)-y_i\leq \epsilon\\

&y_i-f(\boldsymbol{x}_i)\leq \epsilon

\end{align}

其中$C(C>0)$是常数,$\ell_{\epsilon}$是不敏感损失函数($\epsilon$-insensitive loss function)。

\begin{align}

\ell_{\epsilon}=\left\{

\begin{matrix}

0 & |z|\leq \epsilon\\

|z|-\epsilon &\text{otherwise}

\end{matrix}

\right.

\end{align}

通过引入松弛变量(或者称,惩罚因子)$\xi_i$和$\hat{\xi}_i$,则该SVR问题重写为

\begin{align}

\min_{\boldsymbol{w},b,\xi_i,\hat{\xi}_i} \ &\frac{1}{2}||\boldsymbol{w}||^2+C\sum_{i=1}^m(\xi_i+\hat{\xi}_i)\\

\text{s.t.}\ &f(\boldsymbol{x}_i)-y_i\leq \epsilon+\xi_i,\\

&y_i-f(\boldsymbol{x}_i)\leq \epsilon+\hat{\xi}_i,\\

&\xi\geq 0,\hat{\xi}_i\geq 0,\ i=1\cdots,m

\end{align}

类似地,我们通过拉格朗日法找到其对偶问题。通过引入拉格朗日乘子$\mu_i\geq 0,\hat{\mu}_i\geq 0,\alpha_i\geq 0,\hat{\alpha}_\geq 0$,由拉格朗日乘子法,可以得到拉格朗日函数

\begin{align}

L(\boldsymbol{w},b,\boldsymbol{\alpha},\hat{\boldsymbol{\alpha} },\boldsymbol{\xi},\hat{\boldsymbol{\xi} },\boldsymbol{\mu},)

&=\frac{1}{2}||\boldsymbol{w}||^2+C\sum\limits_{i=1}^m(\xi_i+\hat{\xi}_i)-\sum\limits_{i=1}^m\mu_i\xi_i-\sum\limits_{i=1}^m\hat{\mu}\hat{\xi}_i\\

&\quad +\sum\limits_{i=1}^m \alpha_i(f(\boldsymbol{x}_i)-y_i-\epsilon-\xi_i)+\sum\limits_{i=1}^m\hat{\alpha}_i (y_i-f(\boldsymbol{x}_i)-\epsilon-\hat{\xi}_i)

\end{align}

令$L(\boldsymbol{w},b,\boldsymbol{\alpha},\hat{\boldsymbol{\alpha} },\boldsymbol{\xi},\hat{\boldsymbol{\xi} },\boldsymbol{\mu},)$对$\boldsymbol{w},b,\xi$和$\hat{\xi}_i$的偏导为$0$,可得

\begin{align}

\boldsymbol{w}&=\sum\limits_{i=1}^m(\hat{\alpha}_i-\alpha_i)\boldsymbol{x}_i\\

0&=\sum\limits_{i=1}^m (\hat{\alpha}_i-\alpha_i)\\

C&=\alpha_i+\mu_i\\

C&=\hat{\alpha}_i+\hat{\mu}_i

\end{align}

代入,可得SVR的对偶问题

\begin{align}

\max_{\boldsymbol{\alpha},\hat{\boldsymbol{\alpha} }} \ & \sum\limits_{i=1}^m y_i(\hat{\alpha}_i-\alpha_i)-\epsilon (\hat{\alpha}_i+\alpha_i)\\

&-\frac{1}{2}\sum_{i=1}^m\sum_{j=1}^m(\hat{\alpha}_i-\alpha_i)(\hat{\alpha}_j-\alpha_j)\boldsymbol{x}_i^T\boldsymbol{x}_j\\

\text{s.t.} \ &\sum_{i=1}^m (\hat{\alpha}_i-\alpha_i)=0,\\

&0\leq \alpha_i,\alpha_j\leq C.

\end{align}

上述过程还需满足KKT条件,即

\begin{align}

\left\{

\begin{matrix}

\alpha_i(f(\boldsymbol{x}_i)-y_i-\epsilon-\xi_i)=0\\

\hat{\alpha}_i(y_i-f(\boldsymbol{x}_i)-\epsilon-\hat{\xi}_i)=0\\

\alpha_i\hat{\alpha}_0=0,\xi_i\hat{\xi}_i=0\\

(C-\alpha_i)\xi_i=0,\ (C-\hat{\alpha}_i)\hat{\xi}_i=0

\end{matrix}

\right.

\end{align}

若解出$\boldsymbol{\alpha}和\hat{\boldsymbol{\alpha} }$,最终可得

\begin{align}

f(\boldsymbol{x})=\sum_{i=1}^m (\hat{\alpha}_i-\alpha_i)\boldsymbol{x}_i^T\boldsymbol{x}+b

\end{align}

其中$b$可以由KKT条件以及$\boldsymbol{\alpha}$和$\hat{\boldsymbol{\alpha} }$得到。

若考虑特征映射$\phi$,即将样本空间映射到更高维的空间,则相应的形式为

\begin{align}

f(\boldsymbol{x})=\sum_{i=1}^m(\hat{\alpha}_i-\alpha_i)\kappa(\boldsymbol{x},\boldsymbol{x}_i)+b

\end{align}

其中$\kappa(\boldsymbol{x}_i,\boldsymbol{x}_j)=\phi(\boldsymbol{x}_i)^T\phi(\boldsymbol{x}_j)$为核函数。

]]>